Quick Takeaways

- MIT researchers developed a generative AI system that plans long-term visual tasks with about 70% success, outperforming traditional methods that reach only about 30%.

- The system combines vision-language models, which interpret images, with formal planning software, which generates executable action plans, to improve reliability in dynamic environments.

- It translates visual scenarios into files written in the Planning Domain Definition Language (PDDL), which are then refined through simulation and iterative comparison, enabling effective generalization to new problems.

- This approach significantly advances visual-based planning, with potential applications in robotics and autonomous systems, and aims to handle increasingly complex scenarios in the future.

MIT researchers have created a new way to plan complex visual tasks. The method uses artificial intelligence (AI) to help robots and other machines interpret their environment from images and plan what to do next. It is about twice as effective as previous techniques.

First, the system uses a special vision-language model to analyze images and predict actions needed to reach a goal. Next, another model converts these predictions into a format that traditional planning software can understand. This process results in a set of files that guide the machine in accomplishing its task.
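To make "a set of files" concrete, here is a minimal sketch of what rendering a PDDL problem file from a structured scene description could look like. It is not the researchers' code: the function `render_pddl_problem`, the blocksworld-style facts, and the names `stack-two` and `blocksworld` are all assumptions chosen for illustration.

```python
def render_pddl_problem(name, domain, objects, init, goal):
    """Render a PDDL problem file as a string from plain Python data.

    `init` and `goal` are lists of facts; each fact is a tuple of strings
    such as ("on-table", "a"). All names here are hypothetical.
    """
    obj_text = " ".join(objects)
    init_text = "\n    ".join(f"({' '.join(fact)})" for fact in init)
    goal_text = " ".join(f"({' '.join(fact)})" for fact in goal)
    return (
        f"(define (problem {name})\n"
        f"  (:domain {domain})\n"
        f"  (:objects {obj_text})\n"
        f"  (:init\n    {init_text})\n"
        f"  (:goal (and {goal_text})))\n"
    )


# Hypothetical facts, standing in for what a model might extract from an image.
problem_text = render_pddl_problem(
    name="stack-two",
    domain="blocksworld",
    objects=["a", "b"],
    init=[("on-table", "a"), ("on-table", "b"),
          ("clear", "a"), ("clear", "b"), ("arm-empty",)],
    goal=[("on", "a", "b")],
)
print(problem_text)
```

A classical planner would read a problem file like this together with a matching domain file that defines the available actions.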

This new approach has a success rate of around 70 percent. That is significantly higher than older methods, which only succeeded about 30 percent of the time. It also works well with new problems it has not seen before, making it useful in real-world situations where things change quickly.

Yilun Hao, a graduate student at MIT and lead author of the study, explains that this system combines the image understanding power of vision-language models with the precise planning abilities of formal software. Hao adds that the system can take a single image, simulate actions, and produce a reliable plan for long-term tasks.

The research team includes experts from MIT’s AeroAstro department and the MIT-IBM Watson AI Lab. They will present their findings at an upcoming conference. This work builds on past studies that used large language models (LLMs) for reasoning. However, those models struggle with visual inputs, which led the team to explore vision-language models (VLMs).

VLMs are strong at understanding images and text but often stumble over spatial relationships and multi-step reasoning. To solve this, the researchers combined VLMs with formal planning tools, creating a system called VLM-guided formal planning (VLMFP).

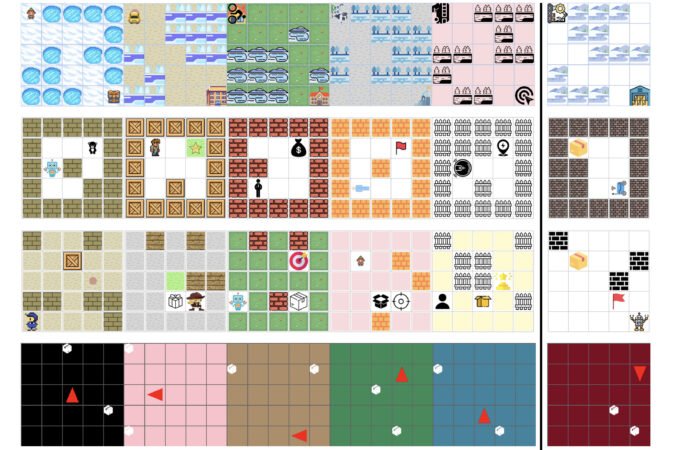

VLMFP works in two steps. First, it describes the scene in words and simulates actions. Then, it uses these descriptions to generate files in the Planning Domain Definition Language (PDDL), a formal language that classical planning software can read. A planner then computes a sequence of actions to complete the task, and the generated files are refined by comparing the results with the simulation.
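The planning and comparison step can be illustrated with a toy example. The sketch below is an assumption-laden stand-in, not VLMFP itself: it uses a simple breadth-first search in place of a real PDDL planner, represents facts as plain strings, and checks the resulting plan by replaying it in a small simulator. The names `Action`, `plan_bfs`, and `simulate` are hypothetical.

```python
from collections import deque
from typing import NamedTuple


class Action(NamedTuple):
    name: str
    pre: frozenset     # facts that must hold before the action
    add: frozenset     # facts the action makes true
    delete: frozenset  # facts the action makes false


def apply(state, action):
    return (state - action.delete) | action.add


def plan_bfs(init, goal, actions):
    """Breadth-first search; returns a shortest list of action names, or None."""
    start = frozenset(init)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if goal <= state:
            return path
        for a in actions:
            if a.pre <= state:
                nxt = apply(state, a)
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, path + [a.name]))
    return None


def simulate(init, plan, actions):
    """Replay a plan step by step; returns the final state, or None if a step fails."""
    by_name = {a.name: a for a in actions}
    state = frozenset(init)
    for name in plan:
        a = by_name[name]
        if not a.pre <= state:
            return None
        state = apply(state, a)
    return state


def pickup(x):
    return Action(f"pickup-{x}",
                  frozenset({f"on-table {x}", f"clear {x}", "arm-empty"}),
                  frozenset({f"holding {x}"}),
                  frozenset({f"on-table {x}", f"clear {x}", "arm-empty"}))


def stack(x, y):
    return Action(f"stack-{x}-{y}",
                  frozenset({f"holding {x}", f"clear {y}"}),
                  frozenset({f"on {x} {y}", f"clear {x}", "arm-empty"}),
                  frozenset({f"holding {x}", f"clear {y}"}))


actions = [pickup("a"), stack("a", "b"), pickup("b"), stack("b", "a")]
init = {"on-table a", "on-table b", "clear a", "clear b", "arm-empty"}
goal = frozenset({"on a b"})

plan = plan_bfs(init, goal, actions)
final_state = simulate(init, plan, actions)
print(plan, goal <= final_state)
```

If the replayed plan failed or missed the goal, that mismatch would be the signal to revise the generated planning files and try again, which is the role the article attributes to the comparison with simulation.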

The system can generate plans for various environments without needing detailed instructions each time. This makes it adaptable for different visual tasks, such as robot assembly and multi-robot teamwork. The researchers trained VLMs to understand scenarios without memorizing patterns, which helped the system succeed in most tests.

Overall, VLMFP achieved high success rates in multiple planning tasks and excelled at solving problems it had not encountered before. The team is now working on handling even more complex situations and reducing errors from AI hallucinations.

This breakthrough marks a step forward in integrating AI with robotics and automation. By enabling machines to interpret and plan based on visual input more effectively, the technology opens new doors for real-world applications in industries like manufacturing and logistics.
