Essential Insights

- PhysicsGen Revolutionizes Robot Training: MIT’s PhysicsGen enables robots to learn efficient task execution by generating up to 3,000 simulations from just 24 human demonstrations, overcoming traditional data collection challenges.

- Enhanced Performance Through Simulation: A simulated robotic hand trained on PhysicsGen data reached 81% accuracy on a block-rotation task, and real robotic arms improved their success rate on collaborative tasks by up to 30%.

- Scalability and Generalization: PhysicsGen allows the repurposing of past datasets for different robotic systems, making specialized robotic data broadly applicable and reducing the need for constant human retraining.

- Future Potential in Diverse Learning: Researchers aim to extend PhysicsGen by incorporating reinforcement learning and advanced perception techniques, paving the way for robots to perform various tasks beyond their initial training capabilities.

MIT Develops Simulation-Based Training for Dexterous Robots

MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has introduced “PhysicsGen,” a system designed to improve robot training. It generates training data tailored to a particular robot so that the machine can learn to perform tasks efficiently.

Traditionally, teaching robots has required vast amounts of instructional data, and knowledge gained on one robotic system is hard to transfer to another. Engineers can collect data by teleoperating a robot, but this method is time-consuming. Learning from unstructured internet videos is an alternative, but such videos lack the specificity that complex tasks require.

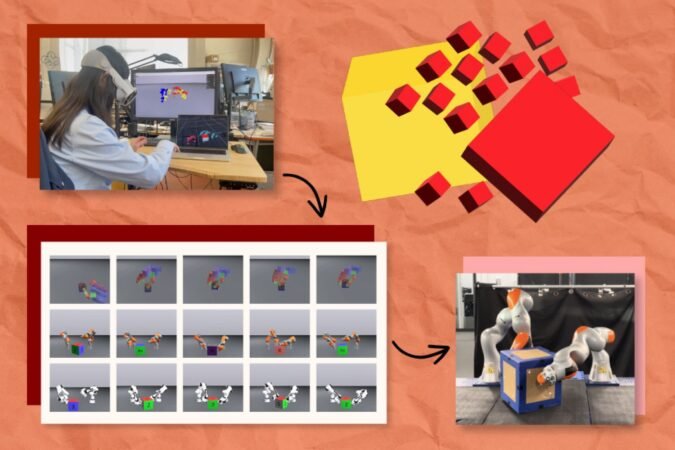

PhysicsGen changes this paradigm. The system takes a few dozen virtual reality (VR) demonstrations and transforms them into nearly 3,000 simulations per robot. This significant increase in training data enables robots to learn optimal movement strategies for various tasks.

The process operates in three steps. First, a VR headset tracks a person’s hand movements as they manipulate objects. Next, these interactions are mapped into a 3D physics simulator, which represents the key points of the motion. Finally, PhysicsGen applies trajectory optimization, simulating the most effective motions for completing a specific task.
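The third step can be illustrated with a toy example. The sketch below is not PhysicsGen’s optimizer, which works inside a physics simulator; it only shows the general idea of turning one demonstrated path into many refined variants. It assumes a hypothetical 3D hand path, jitters it to seed candidates, and then runs plain gradient descent on a simple cost (smoothness plus closeness to the demonstration). All function names, weights, and the choice of 125 variants (roughly 3,000 divided by 24) are illustrative assumptions.

```python
import math
import random

def trajectory_cost(traj, demo, smooth_weight=1.0, track_weight=0.1):
    """Smoothness penalty plus a penalty for straying from the demonstration."""
    smooth = sum((b - a) ** 2
                 for p, q in zip(traj, traj[1:])
                 for a, b in zip(p, q))
    track = sum((a - d) ** 2
                for p, q in zip(traj, demo)
                for a, d in zip(p, q))
    return smooth_weight * smooth + track_weight * track

def perturb_demo(demo, n_variants, noise_scale, rng):
    """Seed candidates by jittering interior waypoints; endpoints stay fixed."""
    last = len(demo) - 1
    return [
        [list(point) if i in (0, last)
         else [c + rng.gauss(0.0, noise_scale) for c in point]
         for i, point in enumerate(demo)]
        for _ in range(n_variants)
    ]

def optimize_trajectory(traj, demo, smooth_weight=1.0, track_weight=0.1,
                        steps=100, lr=0.05):
    """Gradient descent on trajectory_cost, updating interior waypoints only."""
    x = [list(p) for p in traj]
    dims = len(x[0])
    for _ in range(steps):
        new_x = [list(p) for p in x]
        for i in range(1, len(x) - 1):
            for d in range(dims):
                grad = (2.0 * smooth_weight
                        * (2.0 * x[i][d] - x[i - 1][d] - x[i + 1][d])
                        + 2.0 * track_weight * (x[i][d] - demo[i][d]))
                new_x[i][d] = x[i][d] - lr * grad
        x = new_x
    return x

# Hypothetical demonstration: 30 waypoints of a 3D hand path.
rng = random.Random(0)
demo = [[t, math.sin(math.pi * t), 0.5 * t]
        for t in (i / 29 for i in range(30))]
variants = perturb_demo(demo, n_variants=125, noise_scale=0.05, rng=rng)
refined = [optimize_trajectory(v, demo) for v in variants]
```

Each refined path keeps the demonstration’s start and goal but ends up with a lower cost than the noisy candidate it started from. A real system would replace this cost with physical constraints such as contact and joint limits.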

Lujie Yang, a lead author of the project, emphasized that PhysicsGen eliminates the need for humans to re-record demonstrations for each machine. “We’re scaling up the data in an autonomous and efficient way,” Yang explained. This approach allows robots to adopt diverse methods for completing tasks, such as repositioning items in homes or factories.

Early experiments indicate that the approach works. For example, a virtual robotic hand achieved an 81% accuracy rate in rotating a block after training on PhysicsGen’s extensive dataset. This marked a 60% improvement compared to traditional methods relying solely on human demonstrations. Real-world robotic arms also collaborated more effectively, with a 30% increase in task success rates.
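The article does not say whether the 60% figure is a relative gain or a gain in percentage points, and the two readings imply very different baselines. The short calculation below works out both. The helper names are hypothetical, and neither result is a reported number.

```python
def baseline_if_relative(final_rate, improvement):
    """Baseline if the improvement is relative: final = baseline * (1 + improvement)."""
    return final_rate / (1.0 + improvement)

def baseline_if_percentage_points(final_rate, improvement):
    """Baseline if the improvement is absolute: final = baseline + improvement."""
    return final_rate - improvement

final_rate = 0.81   # accuracy reported for the virtual hand
improvement = 0.60  # the "60% improvement" from the article

relative_baseline = baseline_if_relative(final_rate, improvement)
points_baseline = baseline_if_percentage_points(final_rate, improvement)

print(f"relative reading:         baseline of about {relative_baseline:.1%}")
print(f"percentage-point reading: baseline of about {points_baseline:.1%}")
```

Under the relative reading the demonstration-only baseline would be roughly 51%; under the percentage-point reading it would be roughly 21%.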

The technology could transform various industries. For instance, it might enable two robotic arms to work together in warehouses or homes, efficiently sorting items. Yang noted that PhysicsGen can even repurpose older training data, making it relevant for new robotic systems.

Looking ahead, researchers aim to diversify the tasks robots can handle. “We’d like to teach a robot to pour water when it’s only been trained to put away dishes,” Yang shared. The ultimate goal is to create a robust foundation model for robots that learns from both structured and unstructured data.

PhysicsGen highlights the potential of AI in revolutionizing robot training. As this technology advances, it promises to shape how machines interact with their environments, ultimately expanding their capabilities.
